Mirror of https://github.com/fatedier/frp.git (synced 2026-05-15 08:05:49 -06:00)

[GH-ISSUE #1025] frps dashboard unresponsive #812

Originally created by @guyskk on GitHub (Dec 28, 2018).

Original GitHub issue: https://github.com/fatedier/frp/issues/1025

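Issues are only used for submitting bug reports and documentation typos. If the same issue already exists or the answer can be found in the documentation, we will close it directly.

(To save time and handle issues more efficiently, issues that do not follow the template will be closed directly.)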

Use the commands below to provide key information from your environment:

You do NOT have to include this information if this is a FEATURE REQUEST

What version of frp are you using (./frpc -v or ./frps -v)?

0.22.0

What operating system and processor architecture are you using (go env)?

Ubuntu 16.04 x86_64 GNU/Linux

Configurations you used:

frps.ini

frpc.ini

frpc-second.ini

Steps to reproduce the issue:

Describe the results you received:

After running for 1 to 2 days, the dashboard stops responding.

frps log:

Describe the results you expected:

The dashboard responds normally.

Additional information you deem important (e.g. issue happens only occasionally):

frps has 5135 open file descriptors in total:
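One way to obtain a descriptor count like the one above on Linux is to read the `/proc/<pid>/fd` directory of the frps process. The following is a minimal sketch, not the command the reporter used; the helper name `count_open_fds` and the `proc_root` parameter are hypothetical and exist only for illustration. Grouping by kind shows whether the descriptors are mostly sockets (listeners and connections) or regular files.

```python
import os
from collections import Counter


def count_open_fds(pid, proc_root="/proc"):
    """Return (total, Counter by kind) for the open descriptors of `pid`.

    Hypothetical helper: reads the symlinks under <proc_root>/<pid>/fd.
    Reading another user's process usually requires root.
    """
    fd_dir = os.path.join(proc_root, str(pid), "fd")
    kinds = Counter()
    for name in os.listdir(fd_dir):
        try:
            target = os.readlink(os.path.join(fd_dir, name))
        except OSError:
            # The descriptor was closed between listdir() and readlink().
            continue
        # Targets look like "socket:[12345]", "pipe:[678]",
        # "anon_inode:[eventpoll]", or a plain file path.
        if target.startswith(("socket:", "pipe:", "anon_inode:")):
            kind = target.split(":", 1)[0]
        else:
            kind = "file"
        kinds[kind] += 1
    return sum(kinds.values()), kinds
```

Calling `count_open_fds(pid)` with the frps process ID returns the total and a per-kind breakdown; a total dominated by `socket` entries would be consistent with a large number of listening ports.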

After restarting frpc-second, frps stops retrying, but as soon as the dashboard is accessed once more, frps starts printing retrying log lines continuously again.

Can you point out what caused this issue (optional)

@fatedier commented on GitHub (Dec 28, 2018):

Check who is connecting to your dashboard, or restrict the dashboard so that it is reachable only from the internal network.

@guyskk commented on GitHub (Dec 28, 2018):

I checked. Nobody else is connecting to the dashboard, and the dashboard is only reachable from the internal network.

@fatedier commented on GitHub (Dec 28, 2018):

Check with a tool. Each error message in this log means that a new connection request has arrived.

@fatedier commented on GitHub (Dec 28, 2018):

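Since you know how to use command-line tools, it should be easy to check which connections are actually being established. Please analyze that yourself first.

Otherwise, without the environment and without detailed information, there is no way to judge from a one-sentence description.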

@guyskk commented on GitHub (Dec 28, 2018):

OK, restarting frps solved the problem for now. I will analyze it more carefully next time and see whether I can reproduce it.

@guyskk commented on GitHub (Jan 2, 2019):

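At the last restart I enabled frps debug logging. The problem has now occurred again: the dashboard cannot be accessed, and the reverse-proxied ssh is reachable but several times slower than normal.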

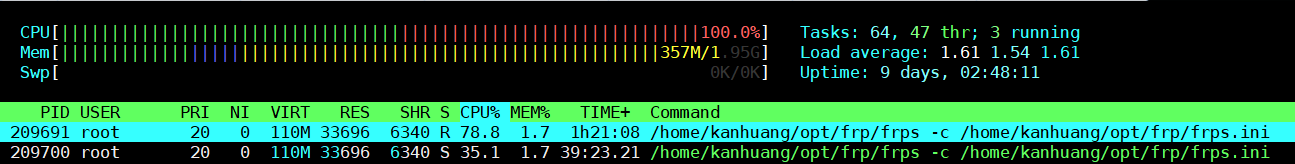

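CPU usage:

Network connections, excluding about 1000 listeners on 8xxx ports:

Log:

There are no errors at all:

Log contents (heartbeat entries removed):

frps is still running, so I can provide more information if needed. I no longer know how to solve this. Thanks!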

@fatedier commented on GitHub (Jan 2, 2019):

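What you need to look at are the connections on port 7500.

Also, with this many ports open you need enough bandwidth. Try to use a simple configuration to narrow down the problem.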
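The check suggested above, looking at the connections on port 7500, can be sketched on Linux by parsing `/proc/net/tcp`. This is an illustrative sketch only: the function name `connections_on_port` is hypothetical, and the state table lists just a few of the kernel's TCP state codes. Ports in that file are hexadecimal, so 7500 appears as `1D4C`.

```python
# A subset of the TCP state codes used in /proc/net/tcp.
TCP_STATES = {
    "01": "ESTABLISHED",
    "06": "TIME_WAIT",
    "08": "CLOSE_WAIT",
    "0A": "LISTEN",
}


def connections_on_port(proc_net_tcp_text, port):
    """Count sockets whose local port equals `port`, grouped by TCP state.

    `proc_net_tcp_text` is the full text of /proc/net/tcp (or /proc/net/tcp6).
    """
    counts = {}
    for line in proc_net_tcp_text.splitlines()[1:]:  # skip the header row
        fields = line.split()
        if len(fields) < 4:
            continue
        # fields[1] is "HEXIP:HEXPORT" for the local end of the socket.
        local_port = int(fields[1].rsplit(":", 1)[1], 16)
        if local_port != port:
            continue
        state = TCP_STATES.get(fields[3], fields[3])
        counts[state] = counts.get(state, 0) + 1
    return counts
```

Passing the contents of `/proc/net/tcp` and `7500` returns a dictionary such as a count of `LISTEN`, `ESTABLISHED`, and `CLOSE_WAIT` sockets. If the server listens on an IPv6 wildcard address, its sockets are listed in `/proc/net/tcp6` instead, which uses the same column layout, so both files are worth checking.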

@guyskk commented on GitHub (Jan 2, 2019):

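I listed all the connections above. There are no connections on port 7500, and even a request made directly on the server gets no response: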
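A direct request of the kind described above can be sketched with a timeout, so that a hung dashboard is reported instead of blocking the shell. This is a minimal sketch and not the command the reporter ran; the function name `probe` is hypothetical, and the URL must be adjusted to the actual dashboard address and port.

```python
import socket
import urllib.error
import urllib.request


def probe(url, timeout=3.0):
    """Return the HTTP status code, or a string describing why no answer came."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return resp.status
    except urllib.error.HTTPError as exc:
        # The server did answer, just not with a 2xx (e.g. 401 when auth is required).
        return exc.code
    except (urllib.error.URLError, socket.timeout, ConnectionError) as exc:
        # Refused, reset, or timed out: the server never produced a response.
        return f"no response: {exc}"
```

For example, `probe("http://127.0.0.1:7500/")` run on the server itself distinguishes three cases: a numeric status means the dashboard is answering, a refused connection means nothing is listening, and a timeout means the port accepts connections but the handler never replies.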

The other 8xxx ports that are open are almost all idle. The connection list above shows only one of them in use:

TCP 10.176.13.48:8901->10.225.230.96:56262 (ESTABLISHED)

@fatedier commented on GitHub (Jan 2, 2019):

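The port range you configured is too large, and the dashboard API currently does not handle that well. You can remove all of these ports and try again. If you keep them, avoid using the API endpoints that fetch all data.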
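To see how many proxies a port range produces before loading it into frps, the range string can be counted ahead of time. The sketch below assumes the comma-and-dash range format used in frp's range configuration (for example `6000-6006,6007`); the helper `count_ports` is hypothetical and is not part of frp, and the range values shown in the usage note are illustrative because the reporter's configuration files are not included in the thread.

```python
def count_ports(spec):
    """Count the ports named by a spec such as "6000-6006,6007".

    Hypothetical helper for illustration; raises ValueError on malformed input.
    """
    total = 0
    for part in spec.split(","):
        part = part.strip()
        if not part:
            continue
        if "-" in part:
            low_text, high_text = part.split("-", 1)
            low, high = int(low_text), int(high_text)
            if high < low:
                raise ValueError(f"descending range: {part!r}")
            total += high - low + 1  # both ends are inclusive
        else:
            int(part)  # validate that a single entry is numeric
            total += 1
    return total
```

With this helper, a spec like `"8000-8999"` counts as 1000 ports, roughly the scale reported in this thread, while `"8000-8099"` counts as 100.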

@guyskk commented on GitHub (Jan 2, 2019):

All right, I will reduce the port range first.

@guyskk commented on GitHub (Jan 9, 2019):

Resolved. After reducing the port range to 100 ports, frps has been running stably.